The artificial intelligence chip startup Groq has secured $640 million in a new funding round led by BlackRock. Other participants include Neuberger Berman, Type One Ventures, Cisco, KDDI, and Samsung Catalyst Fund. The financing brings Groq’s total funding to over $1 billion and values the company at $2.8 billion, more than doubling its previous valuation of $1 billion from April 2021.

The round is a significant win for Groq, which initially sought to raise $300 million at a slightly lower valuation. The startup also announced that Yann LeCun, Meta’s chief AI scientist, will join as a technical advisor, and that Stuart Pann, former head of Intel’s foundry business and ex-CIO of HP, will become its chief operating officer. LeCun’s appointment is notable because Meta is investing in its own AI chips, which makes him a valuable ally for Groq in a highly competitive market.

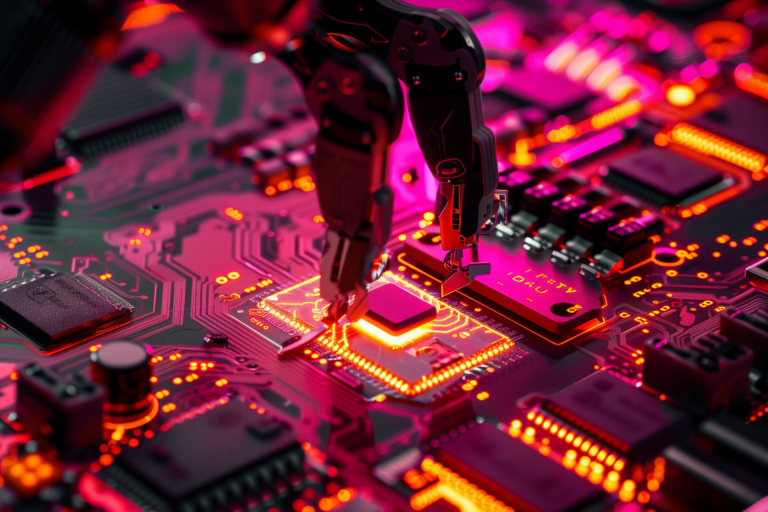

Groq, which emerged from stealth in 2016, is developing an inference engine it calls the LPU (Language Processing Unit). The company claims its LPUs can run generative AI models similar to OpenAI’s ChatGPT and GPT-4 at ten times the speed and one-tenth the energy consumption of conventional processors.

Groq offers an LPU-powered development platform called GroqCloud, which hosts open models such as Meta’s Llama 3.1, Google’s Gemma, OpenAI’s Whisper, and Mistral’s Mixtral. It also provides an API that lets clients use its chips in cloud instances. As of July, GroqCloud had over 356,000 developers, and part of the funds raised will go toward scaling capacity and adding new models and features. "Many of these developers are in large enterprises," Stuart Pann told TechCrunch. "More than 75% of Fortune 100 companies are represented."

The AI chip market

As generative AI continues to grow, Groq faces intense competition from rival AI chip makers and, above all, from Nvidia, the undisputed leader in the sector. Nvidia controls an estimated 70% to 95% of the AI chip market and has promised to release a new chip architecture every year. It is also creating a business unit focused on designing custom chips for cloud computing companies.

In addition to Nvidia, Groq competes with Amazon, Google, and Microsoft, all of which are developing custom chips for cloud AI workloads. Amazon offers its Trainium, Inferentia, and Graviton processors through AWS; Google Cloud uses its TPUs and, soon, its Axion chip; and Microsoft has launched Azure instances for its Cobalt 100 CPU, with Maia 100 AI Accelerator instances on the way.

Groq's strategies and future

To secure its position, Groq has made several strategic moves. In March, it acquired Definitive Intelligence to form Groq Systems, a business unit focused on providing AI solutions to government agencies and sovereign nations. More recently, Groq partnered with Carahsoft to sell its solutions to public sector clients and signed a letter of intent to install tens of thousands of LPUs at Earth Wind & Power’s data center in Norway.

Groq is also collaborating with Saudi Arabian consulting firm Aramco Digital to install LPUs in future Middle Eastern data centers. Alongside building client relationships, the Mountain View, California-based company is working on the next generation of its chip. Last August, it announced that it would contract with Samsung’s foundry business to manufacture 4 nm LPUs, which are expected to offer performance and efficiency gains over Groq’s first-generation 14 nm chips.

The company plans to deploy more than 108,000 LPUs by the end of the first quarter of 2025. The rollout supports its bid to become a formidable competitor in the growing AI chip market, which is estimated to reach $400 billion in annual sales over the next five years.